A popular self-driving car dataset is missing labels for hundreds of pedestrians

A popular self-driving car dataset is missing labels for hundreds of pedestrians

A popular self-driving car dataset is missing labels for hundreds of pedestrians

As we train more and more AI models on "big" data, that bigness starts to become a curse. If a dataset is big enough, we can spot-check it at best. Sometimes, people don't even do that.

This makes it extremely dangerous to work with large datasets that you haven't personally reviewed, as you never know what they do/don't include.

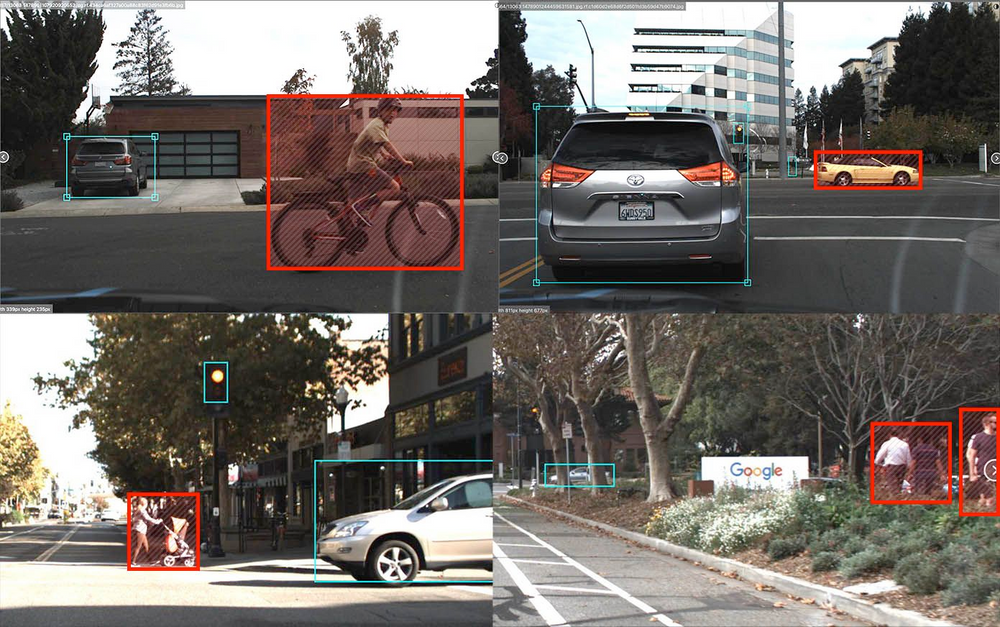

We were surprised and concerned when we discovered that a popular dataset (5,100 stars and 1,800 forks) being used by thousands of students to build an open-source self driving car contains critical errors and omissions. We did a hand-check of the 15,000 images in the widely used Udacity Dataset 2 and found problems with 4,986 (33%) of them. Amongst these were thousands of unlabeled vehicles, hundreds of unlabeled pedestrians, and dozens of unlabeled cyclists. We also found many instances of phantom annotations, duplicated bounding boxes, and drastically oversized bounding boxes. Perhaps most egregiously, 217 (1.4%) of the images were completely unlabeled but actually contained cars, trucks, street lights, and/or pedestrians.

Source: https://blog.roboflow.ai/self-driving-car-dataset-missing-pedestrians/

Related Discussion: https://news.ycombinator.com/item?id=22298882